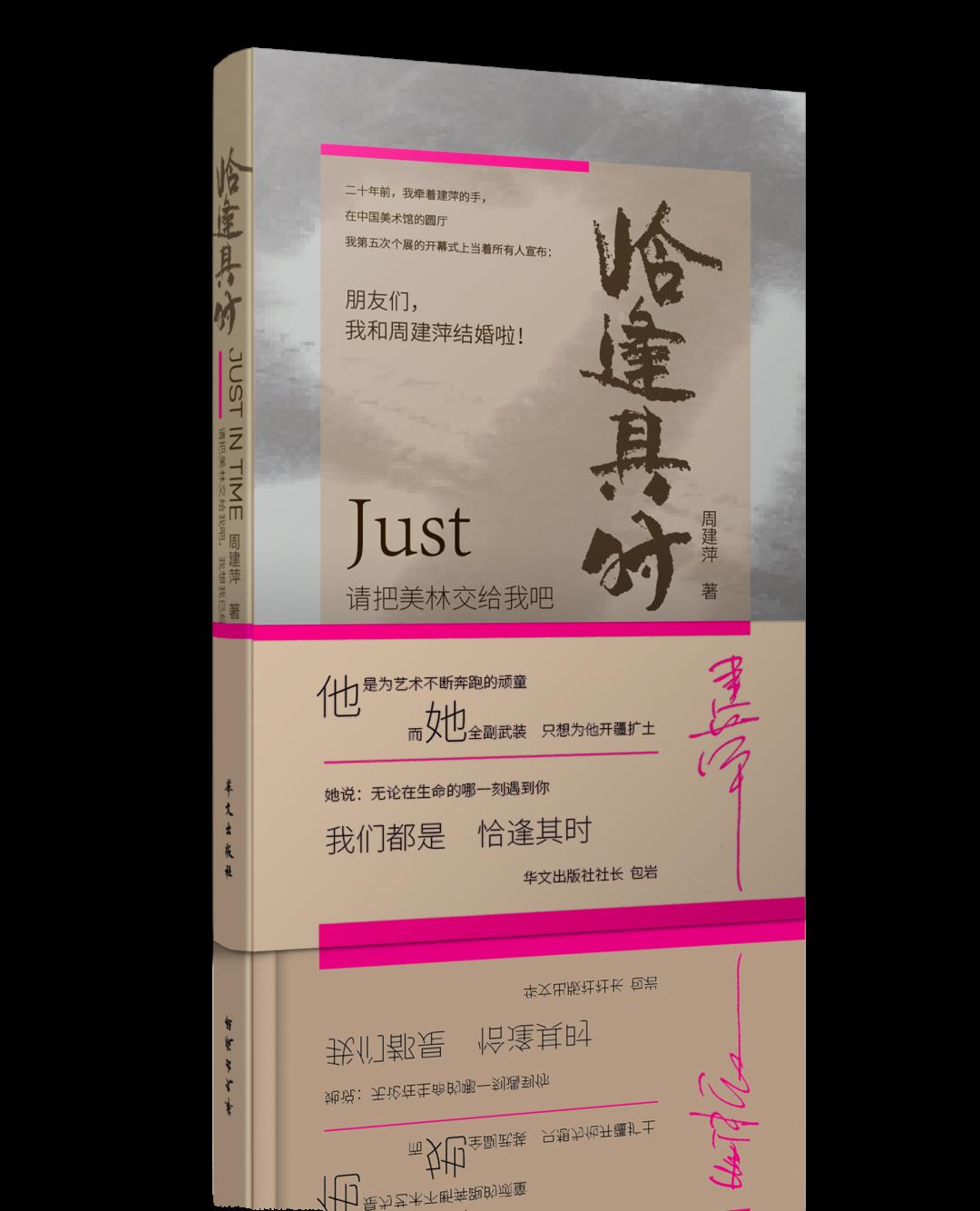

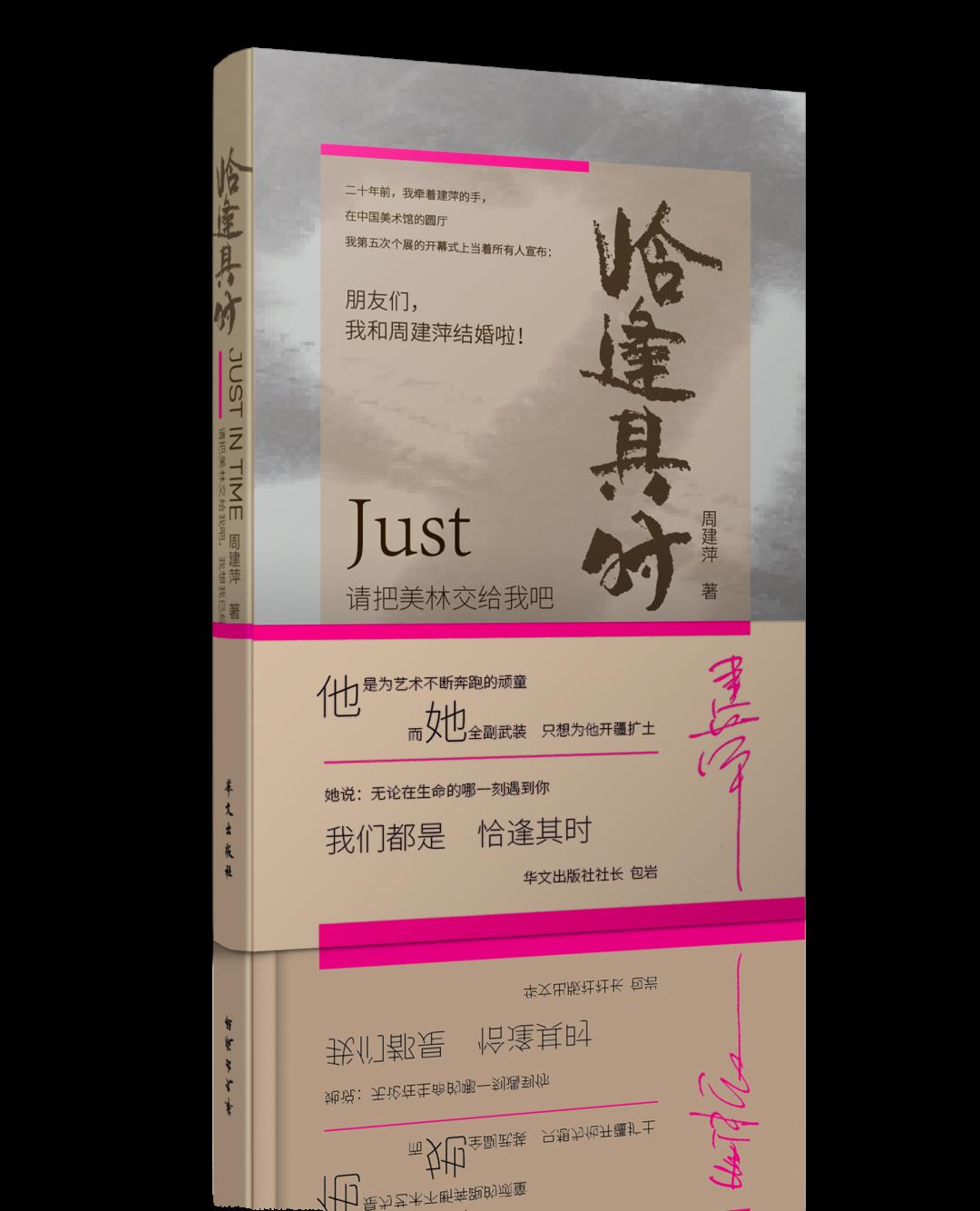

In 2022, Zhou Jianping published three new books: Just in Time, Closed Couples and Beautiful Life. In them, she recounts an artist’s life, creative process and struggles from the perspective of his wife.

The full text of "Love Bonus (I)" from Just in Time is presented here for readers.

Just in Time

Zhou Jianping

Just in Time is Zhou Jianping’s account of her emotional life and career struggles alongside Han Meilin, written from the perspective of his wife and partner. In fourteen documentary essays, the book describes in detail three things. The first is the inner journey of Ms. Zhou Jianping and her team as they defended and developed Han Meilin’s art with "the power of the wild". The second is the birth and growth of their "five children", namely four Han Meilin art museums and their son Han Tianyu. The third is a series of highlights from Han Meilin’s global tour at the age of 80. Together, these form a tribute to their twenty-year marriage.

Love Bonus (I)

If we borrow the financial concept of "profit"

as a metaphor for what love yields,

then the "love dividend"

is best translated as "Profit of Love".

▲ Happiness practice

Meilin and I were married from 2001 to 2021, a full twenty years. Unfortunately, we met late. Otherwise we would surely have gone on to our golden, or even diamond, anniversary. Meilin often asks me why he didn’t meet me earlier. I tell him it is fate.

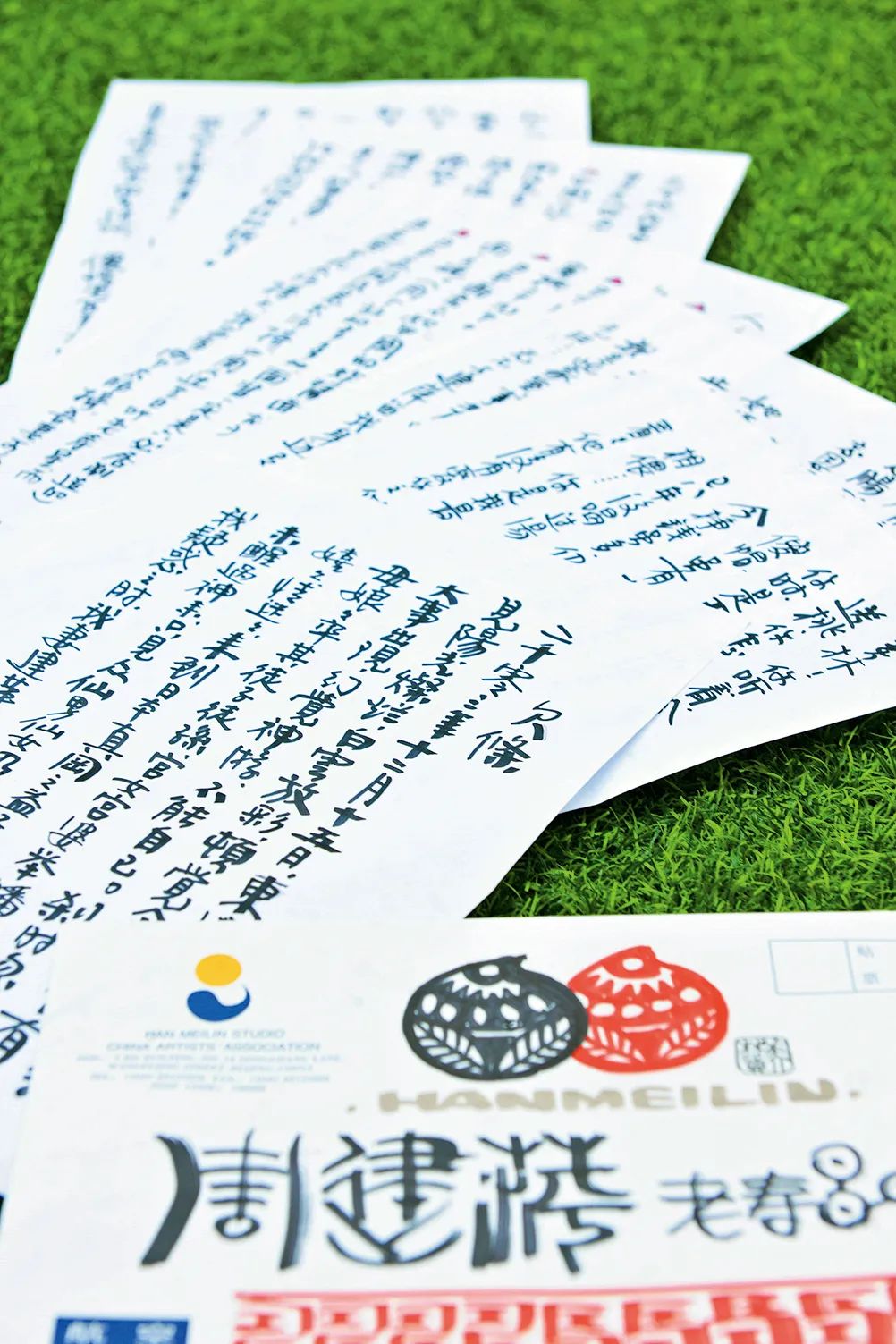

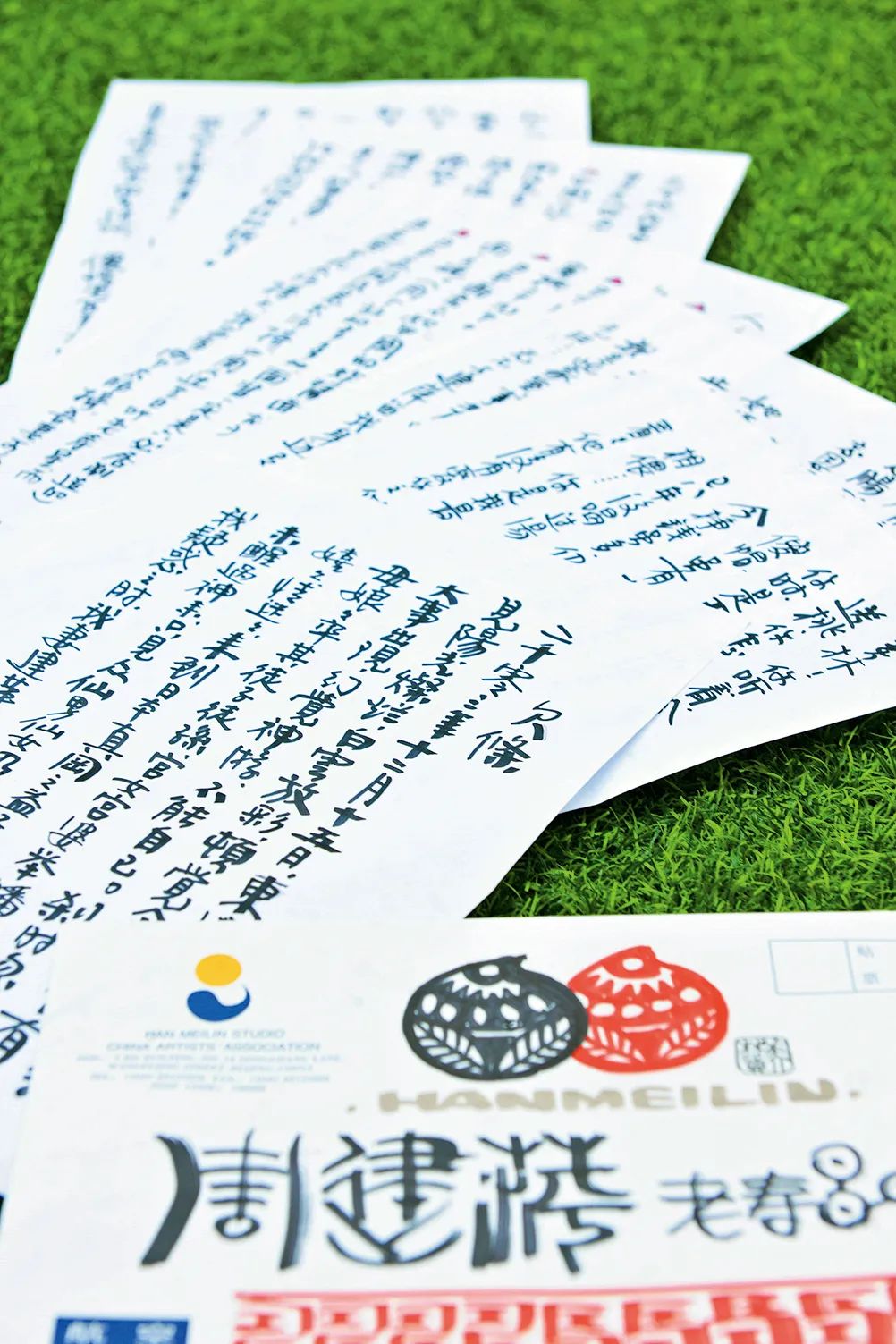

A few days ago, I came across the "IOU" Meilin wrote me for my birthday in 2003, while he was making ceramics in Mashiko, Japan.

On December 15, 2003, in Japan, the morning sun was bright and the white clouds gleamed. I sensed that something important would happen beneath them that day, and I could hardly contain myself. Suddenly I saw the Queen Mother of the West arrive in Mashiko, Japan, leading her disciples, grandchildren and ladies-in-waiting, all of them smiling and enchanting... I did not wake up until all the immortals had left heaps of flat peaches behind and departed. While I was still puzzled, my wife Jianping turned over and shouted: Han Meilin! Listen, today is my birthday, and the Queen Mother herself has come to deliver peaches. Why are you still as dumb as a block of wood? I married you because of a dream the Queen Mother sent me. She said: There is a big fool in this world. Brilliant as he is, he has no worldly sense, and it makes people sick with worry to watch. He has earned a great deal of money, yet he still has none. His clothes are patched and he cooks his own meals. He has had no soup for eight years, no dining table for ten years and no one to love him for twenty years... You are my youngest daughter. Will you go to Beijing and see whether he loves you? If he does, stay and help him. Fool though he is, he is also a son of heaven, a bodhisattva at heart who has had a hard life. He never forgets the poor, his benefactors, the hard years or his mentors... Go! He is fighting for his life in Fuwai Hospital...

I have been married to Jianping for three years, and I cannot count how much I owe her. With her good looks and my clowning, I often make my wife laugh until she wipes away tears. At sixty-seven, I have found a good wife late in life. Looking up at the sky, I wonder whether I am still living in this muddled world. I bite my tongue, and yes, it is real. This family, this brilliant career, so many friends and so much energy... all of it has to do with having Jianping by my side. I am the luckiest person in the world, watched over by thousands of envious and well-wishing eyes.

There is no flower shop in Mashiko, and I won’t buy Japanese flowers anyway, so I owe my wife ninety-nine red roses.

Japanese peaches cannot bring long life the way Chinese longevity peaches can, so I owe my wife thirty-nine peaches.

Japanese noodles are no match for Chinese zhajiang noodles, so I owe my wife a big bowl of zhajiang noodles. It must have plenty of diced meat, and the sauce must come from Liubiju.

I haven’t seen a single armchair in Mashiko. In China, the person celebrating a birthday is honored as the "birthday star" and must sit in an armchair. We have two armchairs at home, so my wife can sit in one during the day and the other at night. When she is tired she can curl up her legs, and for at least four or five hours her legs won’t go numb and her back won’t ache.

A Chinese birthday needs lots of children to liven it up. There are few children in this Japanese town, where the population is shrinking, so I owe my wife a crowd of children. When I get back to China, I will round up Danlin, Dekuan and a girl nicknamed Nizi. If that is still not enough, I will go to the canteen and ask the cook to lend us Little Red, who sweeps the floor. She is only 1.4 meters tall, and no one would take her for an adult. If the birthday girl, Jianping, still thinks there are too few children, I will ask the director of the Liyuan police station to round up a few more in Yunjing. There is one condition: they must be able to sing "Happy Birthday to You".

If the old birthday star Zhou Jianping is still not satisfied, she may invite no more than three "dog-headed strategists", such as Uncle Shi, to come up with ideas (good ideas, bad ideas, anything goes). As long as the old birthday girl is happy, nothing else matters. But at a Chinese birthday, it is the birthday girl who pays and treats everyone to longevity noodles. So the old birthday girl must loosen up her little vault and take out two stacks of cash to host the party. That way everyone ends up like Zhu Bajie eating distiller’s grains: drunk and full.

I already owe so much. The birthday girl is welcome to add a few more items.

Your foolish husband, Meilin

Written in Mashiko, Japan, on December 15, 2003

This is what daily life with Meilin is like. For twenty years, our life together has been "as sweet as honey mixed with oil", simply because he is a man of interesting character and soul.

▲ IOUs for Zhou Jianping’s birthday in 2002.

All encounters in this world are reunions after a long separation, and the arrival of our youngest son was truly a gift from heaven. That is why Mr. Feng Jicai named him Han Tianyu.

Although he came late, Tianyu’s arrival made our family more complete.

Tianyu has quite a presence. Less than two months after he was born, he was already trending online. He deserved it, because his road into this world had not been easy.

Before Tianyu was born, my secretary installed an app called "Pro-Baby" on my phone. She suggested that once he arrived, I upload photos or videos of him every day to leave him precious memories for the future. On the afternoon we were to bring our son back to China, a red sunset lit up the sky. Holding the baby in my arms and pulling Meilin to my side, I asked the secretary to film a video of us. We celebrated our son’s arrival in the world and wished him a swift return to his motherland.

Before boarding the plane, I shared this happiness with a few friends. Perhaps unable to contain their joy, they forwarded the video, which looked like an "official announcement", to WeChat groups we did not know. The news spread like a flood bursting its banks. Before our plane had even landed in Beijing, the video was already trending. After the initial alarm, I thought: isn’t this good news worthy of everyone’s blessing?

I remember a morning in 2005, when the osmanthus was in bloom in the Hangzhou Botanical Garden. Meilin and I were leaving the Han Meilin Art Museum in Hangzhou when a group of older women surrounded our car. We hurriedly rolled down the window and heard them say loudly: "We don’t want anything else. We just hope you’ll have a little Han Meilin."

Those words have lingered in my ears ever since.

In fact, in 2005, I was pregnant with Meilin’s child. But I was a civil servant, and the family planning policy of the time applied. If the child had been born, I would have been fined and forced to resign. The leaders of my work unit would also have had their salaries cut by one grade.

The blueprint for Han Meilin’s art career was only just unfolding. My parents cared a great deal about my civil service position. Meilin’s daughter Xiaocao had only just come of age, and my own son was still a minor... After weighing everything, I could only reluctantly give up. For years afterward, whenever someone mentioned a "little Han Meilin", my heart would ache faintly.

Then Meilin’s career climbed higher, my parents came round, and my son grew up... My hope of having a child with Meilin grew stronger and stronger. Although Meilin and I were getting older, I set out on the road of IVF without hesitation. It is in my nature: once I know a road is right, I keep going no matter how hard it is.

Over those ten years, only I know how hard the road was. For the first seven years, I mostly groped my way forward, taking many detours and suffering many setbacks. The hormones I took made me gain ten kilograms. Then I met a senior, internationally experienced IVF doctor (in vitro fertilization, commonly called a "test-tube baby"). To protect egg quality and minimize harm to my body, he suggested that I retrieve eggs in natural cycles. I kept at this for three years for the sake of my dream. To save time, I became close friends with a young female ultrasound doctor at a hospital near our home. Her follicle monitoring was so accurate that it amazed the IVF doctors. During those three years, several women pursuing the same dream alongside me could not keep going and gave up halfway. I alone stayed on the road.

In fact, egg retrieval is only the first step of IVF’s long march. It is followed by one hard stage after another: embryo culture, embryo transfer, monitoring, implantation and, finally, delivery.

I cannot forget one rainy autumn day after my fifth failure. Zhu Xuebin, curator of the Hangzhou museum, went with me to Lingyin Temple. Kneeling before Guanyin, I broke down and could not get up for a long time.

I told myself that the three Han Meilin art museums were already our "children". Heaven had been kind enough to us, and we should be content! The book The Selfish Gene says: "Because of the emergence of culture, the ultimate task of our life will not only be reproduction, but also the creation and inheritance of culture." A great person’s genes survive only a few generations, but his ideas and works are still celebrated hundreds of years later. The book calls this a "meme", which can be thought of as a cultural gene. So there are many ways to realize the value of a life, and you don’t have to pass on your own biological genes.

I once talked with Meilin about giving up on having a child, but the words of our good friend, Ms. Lisa Lu, gave me fresh courage. She told us about June 20, 2012, when she was filming in Dayong, Hunan Province. There was a "child-praying cave" there that was said to be very effective. Ms. Lu hung a prayer lock in the cave for Meilin and me and had been praying for us ever since. She urged us not to lose heart and to keep trying.

After hearing Ms. Lu, I decided to try one last time.

This time, it worked!

On January 13, 2018, the Han family welcomed a new son: Tianyu had arrived. He is lucky, because he has a father he can be proud of all his life, Han Meilin. His arrival had the right time, the right place and the right people. The country had introduced the two-child policy. Meilin’s career was booming, the three Han Meilin art museums were developing steadily, and the bugle for the global tour had sounded... It was just in time.

▲ Tianyu has arrived.

Our good friend Wei Minglun sent a congratulatory message:

Liang Hao became top scholar at eighty;

Meilin has a son at eighty-two!

Today’s tale will go down in history!

Our good friend Yang Long saw Tian Yu’s photo and said:

A full forehead, a sign of talent;

thick, fleshy ears, lovely and amiable;

eyes of clear black and white, knowing love from hate;

a fine nose and a kind heart.

On the morning of Tianyu’s thirty-eighth day, Meilin started fussing about. He took out a vest he had worn and found one of Tianyu’s socks, which he sewed onto the vest. Beneath the sock he wrote Tianyu’s name in pinyin. He also cut out the number "38" and designed a logo as decoration. With these elements he pieced together a "Tianyu-brand jersey". Wearing this "No. 38 jersey", he posed for me to photograph him and sent the picture to his friends. This was his unusual way of announcing the Han family’s new addition: the good news that an old mussel had borne a pearl.

▲ Tianyu brand No.38 jersey

In fact, when I first raised the idea of having a child, Meilin was not very keen. We worried that at our age we would not have the energy to raise a child. At my insistence, he gave in and joined me in pursuing the dream. Since the baby arrived, Meilin’s love for him has exceeded all expectations, almost to the point of doting.

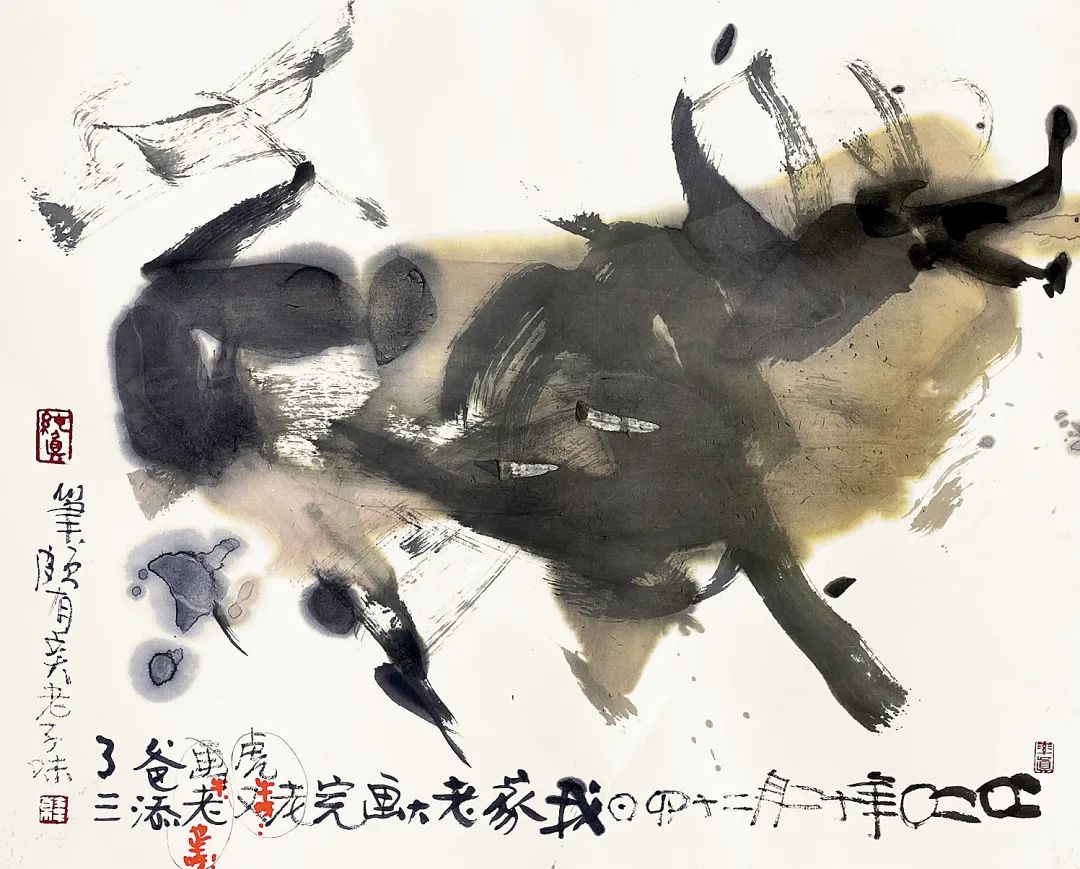

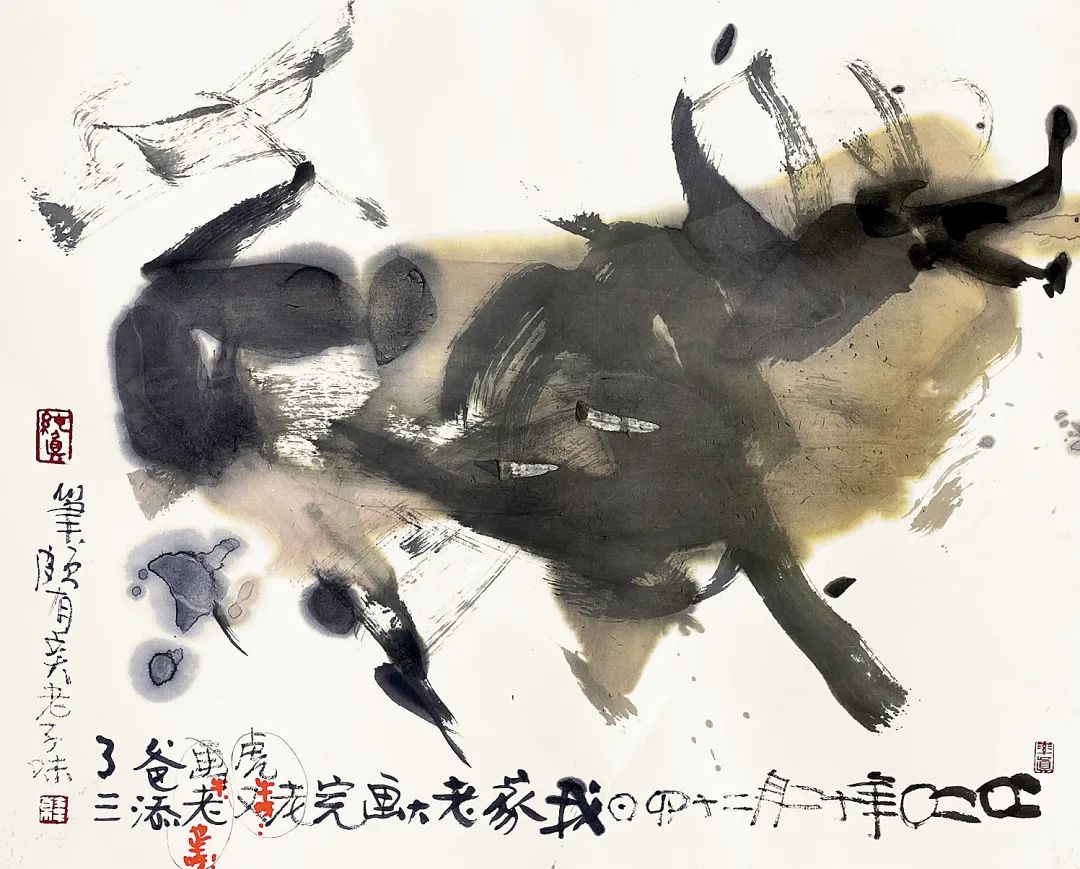

When our son drooled on a painting his father was working on, Meilin said, "It doesn’t matter. This is a work my son and I created together!" He would hold our son in one arm and paint with the other hand for hours at a time. When we worried he was tired and wanted him to rest, he would not hear of letting the boy go.

Sometimes I joke with Meilin: "Meilin, if you ever offend me, I’ll run off with our son." Meilin replies, "Then you’d really be the death of me!" Ever since he was tiny, Tianyu has loved the calligraphy his father hung outside his door: "The highest good is like water." Whenever our son looks at these four characters and giggles, Meilin jokes, "Is this Laozi’s first-generation disciple?"

Once, when we took our son for a vaccination, he remembered to tell his father even while he was crying: "Dad, my snot is on you. Wipe it off quickly." Our son often rides his toy car and makes his father chase him. After two laps, his father says, "Dad is tired, I can’t keep up." Our son replies, "Dad, take care of your health!" and rides off... At moments like these, Meilin overflows with fatherly love. He says, "From now on, I’m giving up my career. My son is my career."

On Tianyu’s first birthday, Meilin wrote the character for "life" for his son. He explained that "life" is given by parents. Though the road to it was tortuous, he believes it was heaven’s doing, and beneath the character he stamped many seals reading "God’s will" and "Good luck". "Life" is also in your own hands. Everyone has only one life, and he hopes his son’s will be whole and long. Beneath the character he also stamped many seals reading "Buddha" and "Longevity".

▲ Dad gave Tianyu a birthday present.

Perhaps it is in the genes: Tianyu could draw before he turned one, and the fan paintings he made put me to shame. Ever since he learned to walk, he has loved drawing pictures and stamping seals everywhere. Meilin said to him, "You’re stealing my job before you’re even one?" One day, when our son was not yet three, the secretary took down an ink painting of a cow to have it framed. I loved it at first sight and assumed it was Meilin’s new work, but the secretary said Tianyu had painted it! I could not believe my eyes. Only when I found Meilin’s inscription on our son’s work did I accept it: "On December 24, 2020, my boss painted a tiger and an old horse, and his father added three strokes. Quite a seasoned work." Meilin said proudly: "My son is either a genius or a talent. At the very least, he is no fool. They say a Jewish family has five children to produce one outstanding one. My son is the fifth." Every time my brother sees how Tianyu is growing, he asks, "How do kids today get such optimized genes?"

▲ A cow Tianyu painted before he was three

Wang Luxiang of Phoenix TV said that the arrival of his youngest son added a new dimension to Meilin’s artistic life. Indeed, since 2018 both the quantity and the quality of Meilin’s work have probably reached a historic high. The "Han Meilin Zodiac Art Exhibition", held in Wenhua Hall of the Palace Museum on January 5, 2019, attests to this. Meilin has also designed the zodiac stamps for the Dingyou, Jihai and Gengzi years, which is unprecedented in the history of China’s zodiac stamps.

On December 21, 2019, another museum dedicated to Meilin, the Yixing Han Meilin Zisha Art Museum, opened. Addressing guests from Anhui, Meilin said, "I suffered so much when I was young. I hope my son won’t have to suffer in the future. I have already suffered for him."

Meilin often tells friends with emotion: "My son’s arrival didn’t get in the way of my work. It took my art to a higher level, which I never expected. Because of my youngest son, my life feels complete. I never expected to reach such fulfillment in my later years."

Since Tianyu started kindergarten, Meilin has insisted on taking him and picking him up himself. One day, when he couldn’t ride along to drop him off, he walked his son to the gate. Our son asked his father to chase the car, and his father chased it all the way out of the gate... As Meilin walked back alone, an old man doing his morning exercises called out, "Teacher Han, you’re in great health!" Meilin answered shyly, "Thank you, thank you!" When he got home, Meilin said to me, "If you live in people’s hearts, you live forever."

The "love dividend" Meilin and I share extends well beyond little Tianyu. Our elder daughter Xiaocao and my elder son have shared in it too. Besides working hand in hand in their careers, the two have one thing in common. From the very beginning of our new family, Xiaocao called me "Mom" and my son called Meilin "Dad" without hesitation. Now both of them dote on their little brother and take caring for and teaching him seriously, which is admirable.

▲ With my daughter Xiaocao

When I married Meilin, Xiaocao was eighteen, a very innocent girl who looked like her father. Until she was five, Xiaocao was well educated and well cared for. Like children today, she learned piano, chess, calligraphy and painting. After she turned five and was separated from her mother, Meilin neglected her education. Father and daughter lived together, and Meilin was often seen carrying her around on his bicycle. But he was then at the height of his career, and he often left his daughter with the housekeeper at home until Xiaocao went to study in Canada. Since returning to China, Xiaocao has worked for many years in a range of jobs. She has made films, run a pet magazine and a Japanese food store, and worked as a club manager and a cultural and creative manager. She has built up rich experience of the world and sometimes judges people and situations more accurately than I do.

▲ With my son

My son came to Beijing at fifteen. After some years under the guidance of Meilin and me, he went to study in the United States. He graduated from the University of Southern California with a bachelor’s degree in economics. After returning to China, he completed the EMBA program at Tsinghua University and earned a master’s degree in business administration from the Chinese University of Hong Kong. He currently serves as chairman of Beijing Tianpin Yixuan Culture and Art Co., Ltd. and deputy secretary-general of the Han Meilin Art Foundation. He is also studying for an EMBA at Tsinghua’s Wudaokou School of Finance. He has grown into a modest gentleman. He worked in the investment banking department of BOC International (Hong Kong), spending two years on a front-line IPO (initial public offering) execution team. The team was responsible for listing mainland companies in Hong Kong as H shares and red chips. Because of the financial turmoil a few years ago, Meilin and I hoped he would move into cultural and creative work and open up a new field. He took our advice and focused on developing art derivative products and incubating artists’ IP. The company he founded, Tianpin Yixuan, is the first in China dedicated to artist IP operation and derivative product development. It has set an industry benchmark for licensing the IP of domestic artists, and the art derivatives it has launched have been widely praised in the industry. As an individual angel investor, he has invested in emerging sectors such as TMT (technology, media and telecom) and pan-entertainment. He has identified and backed star companies in many sub-sectors, including Liangye Lighting (sold to Bishuiyuan for 1.3 billion yuan), Handing (GEM: 300300), ICONIQ new energy vehicles, Bee mining machines and Hermeng, with outstanding investment results.

▲ Father and son

Since last year, the brother and sister have been developing art IP together, with good results at home and abroad.

Our immediate families have been direct beneficiaries of the love bonus Meilin and I share, and my parents are among them. My father is only two years older than Meilin and my mother two years younger, yet this has never upset the order of generations. Whenever Meilin meets his father-in-law and mother-in-law, he treats them with great respect and greets them with the full formal courtesy of a Shandong man. Over the past twenty years, Meilin has become the pillar of our family in my parents’ eyes, and Tianyu’s birth has brought them even more joy.

My father is a very upright man who cannot abide certain unhealthy practices in society. Once, he learned that the owners’ committee in his residential community planned to "change the dynasty" on the last day of the year. The existing property management company’s contract was expiring. It was to be replaced by a company that lacked proper qualifications and did not have the residents’ support. My father phoned me in a hurry to pass on the residents’ concerns. When Meilin heard about it, he took my hand and we flew straight back to Hangzhou. That night, Meilin stayed with the residents in the community lobby until the subdistrict office sent an interim property company to take over. This eased the residents’ worries and made my parents smile.

Meilin says he will do anything as long as his parents-in-law are happy. To please my parents, he donated a 3.5-meter-high bronze sculpture, "White Horse Pride", which stands at the entrance to their community. Meilin said, "Now my parents can see their son-in-law’s sculpture every day as they come and go."

▲ With my parents in front of "White Horse Pride"

The stone tablet beside "White Horse Pride" records this Hangzhou son-in-law’s deep affection for the Grand Canal, for the people of Hangzhou and, of course, for his parents-in-law. The inscription reads: "The Grand Canal has a long history and, like a jade belt, guards the prosperity of the towns along its banks. The Han Meilin Art Museums in Beijing and Hangzhou are like shining pearls on this jade belt, telling an endless love story with the Grand Canal. On the occasion of the seventieth anniversary of the founding of the People’s Republic of China, Han Meilin donated his masterpiece, the sculpture "White Horse Pride", to the people of Hangzhou, so that this love may last forever. The sculpture is cast in bronze in the style of the Han and Tang dynasties. In full gallop, its hind hooves leap into the air and its forelegs stretch forward as if in flight. The whole form is full of rhythm and movement. The decoration on the horse’s body is simple and deft, complementing its form and highlighting the momentum of the running horse. Its head is held high, and its neigh seems to resound through the sky. Its tail is unlike a horse’s: it is dragon-like and merges with the waves."

"White Horse Pride" embodies the spirit of the Heavenly Horse, bold and galloping a thousand li. Rising from the waves and full of vitality, it bears witness to an era in which the Grand Canal’s history meets its present and nature blends with culture. It also carries the artist’s deep affection and good wishes for the Grand Canal.

These twenty years were also the most harmonious years between Meilin and his elder and younger brothers. In earlier years, hardship and the struggle to build his career left Meilin no time to look after his relatives. Over these twenty years, as his career flourished, the first thing he thought of was to share his good fortune with them.

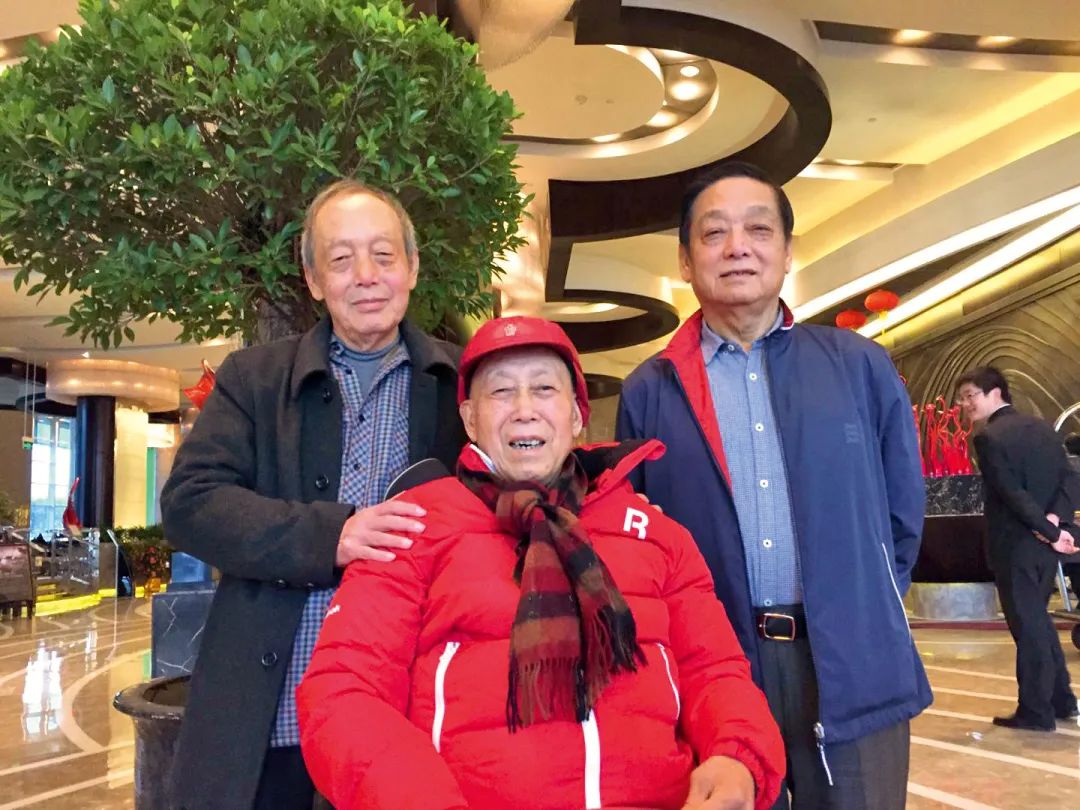

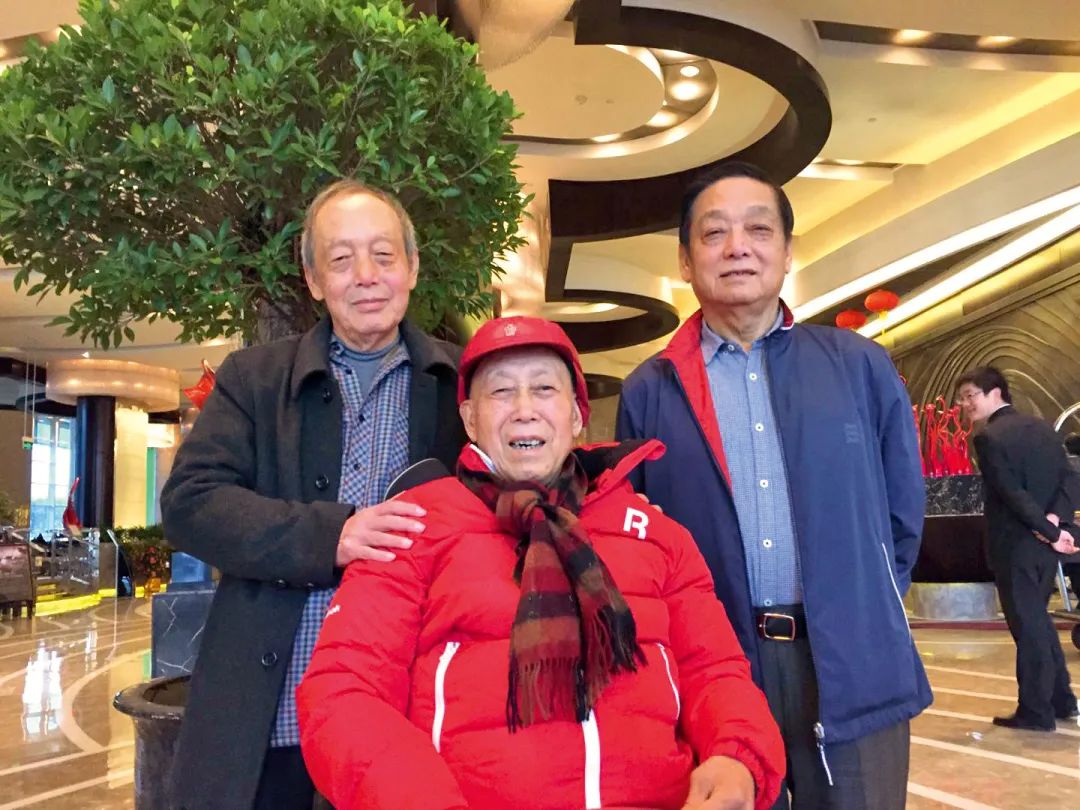

▲ Meilin and his two brothers

We bought homes for Meilin’s elder and younger brothers in their own cities, so they could live happily with their children and grandchildren around them. On holidays, we brought them to Beijing or traveled elsewhere together. Whenever Meilin held an exhibition, the brothers and their families came to join us. Sadly, our time together was too short. Meilin’s elder brother and younger brother died of illness in 2018 and 2020, respectively.

Meilin’s elder brother was a kind and refined man. After leaving the army, he became curator of the Songjiang Museum in Shanghai. He was serious and meticulous at work, but at home he was a hands-off "shopkeeper" who left all the housework to his wife.

When Meilin and I married, we did not announce it in advance, because we did not want our friends to feel obliged to send gifts. Meilin simply announced our marriage at the opening of Han Meilin’s fifth solo exhibition at the National Art Museum of China. We had no idea that his elder brother had prepared a wedding speech. Only when he was leaving Beijing did he hand me his handwritten speech, which I treasure to this day. He has since passed away, but when I read his words, I can still hear his voice:

Today is the wedding day of Meilin and Jianping. On behalf of Meilin’s family, I would like to thank all the leaders, relatives and friends for coming.

Meilin and Jianping have each made remarkable achievements in their respective careers, achievements that surprise even their peers. Perhaps heaven has long favored them. It filled their lives with hardship, spurred them to strive in adversity and tempered their will. Then it let them come together happily once they understood the true meaning of life. Today, this happy moment has finally arrived. I thank Jianping and her family for giving Meilin such a wonderful bride. This is Meilin’s good fortune and the Han family’s good fortune. I hope they will treat each other with respect, like Liang Hong and Meng Guang, and be happy forever. Once again, I thank our friends and relatives who are here today. I propose a toast: let’s raise our glasses and wish Meilin and Jianping a happy life together. Cheers!

Han Furong, Su Hongqi

Life is short. Brother, your wedding speech still rings true today.

Meilin’s younger brother, Lao San ("Third Brother"), worked at the Baoding aircraft factory. What impressed me most was how much he loved playing Santa Claus. Every year before Christmas, he would bring chickens from Baoding on the long-distance bus to Beijing. There he would play Santa and celebrate his second brother’s birthday. Whenever I bought clothes for Meilin, I usually bought some for the eldest brother and Lao San too. When Lao San came to visit, I would have him put on his new clothes right away. He would then delight us by strutting like a runway model.

Lao San loved fatty meat. I worried about his blood lipids and told him to eat less. He told me he often exercised along the railway tracks. I hurriedly called his wife and asked her to keep an eye on him and never let him exercise there again, since it was unsafe. When Lao San and his second brother Meilin were apart, they missed each other. As soon as they met, though, they bickered, sometimes even over dumplings or yuanxiao. At such times I would scold Meilin: the elder brother should give way to the younger!

At the end of 2017, during Meilin’s 80th exhibition at the National Museum, his elder brother in Shanghai fell seriously ill. We asked Lao San and his wife in Baoding to visit the elder brother and his wife in Shanghai first, and we followed. On New Year’s Eve of 2018, Meilin and I hurried to Shanghai to spend the reunion holiday with the elder brother, his wife, Lao San and Lao San’s wife. Unexpectedly, after Lao San returned from Shanghai to Baoding, he too fell gravely ill. In the spring of 2019, Meilin, his elder sister-in-law and I went to Baoding. Lao San, by then only partly conscious, took out a notebook for me. The handwriting was neat, like a fair copy of a novel. Looking closer, I saw that Lao San had been copying passages from my book Never Fade. I didn’t understand why. Was he practicing calligraphy?

Some memories remain indelible in my mind. There was the temple fair in Beijing that year, where I put a cute red wig on Lao San. And there were the strawberries and Chinese toon shoots he brought us every spring.

Before she died, Meilin’s mother asked the three brothers to stay united. Today, of those three united brothers, only Meilin remains.

Fortunately, Meilin has three apprentices who are closer to him than sons: Xu Dekuan, Zhao Jinxin and Lu Wuke. Xu Dekuan has been with Meilin for more than thirty years, and Zhao Jinxin and Lu Wuke for more than twenty. They are by Meilin’s side every day. I am grateful to them from the bottom of my heart, because with them keeping Meilin company, I am free to throw myself into my own work. I also want to thank them for the meticulous care they give their little brother Tianyu. Sometimes I see the three of them lying on the ground pretending to be knocked down, drenched by their little brother’s water gun. I feel very gratified, because I know that playing with big brothers helps build a boy’s masculinity.

▲ Meilin and his three apprentices

Meilin proudly tells everyone: "Do you know how the sculptures in dozens of cities got finished in recent years? I did it with these three apprentices!" Curiously, the three have been with Meilin longer than I have, yet they are all a little afraid of their teacher. This is probably a case of "strict teachers produce excellent students". In art, Meilin is always demanding of his students, but in daily life he treats them like a parent. When our finances were still tight, he bought Xu Dekuan a house and a car. He later bought houses for Zhao Jinxin and Lu Wuke, so that their families could live in Beijing without worry. And whenever something comes up in their families, such as a relative falling ill or a child starting school, we take care of it.

In fact, Han Meilin Studio was once a large operation, and before these three, Meilin had many apprentices. Some flourished under his training, achieved a great deal and went on to start their own careers; today they are well known in their own right, such as Ji Feng and Wang Zhigang. Others fell away naturally because they lacked drive or vision, and some even went down a path of no return…

Everyone knows that our family has a "Housekeeper Chen": Chen Lihua. I have been married to Meilin for 20 years, and she has been with us for the same 20 years. She was originally the lawyer of a friend of Meilin’s. At the time, Meilin’s work urgently needed a lawyer, so Lihua naturally took on the role.

Lihua is a gifted graduate of Northwest University of Political Science and Law. She grew up in Yinchuan as the second child in her family, and she is a talented woman who is both rational and emotional. Perhaps because Lihua and I are both Sagittarians, we get along in every respect; and since Sagittarius is said to get along well with Capricorn, we both get along with Meilin too. The result was a case of "when three walk together, one of them can be my teacher." We often "choose what is good in the others and follow it, and correct in ourselves what is not good." In this way, we have come to this day hand in hand.

At that time, there was no Han Meilin Art Museum. Apart from some of Meilin’s works, we basically started from scratch. My father was director of the Personnel Department of the Zhejiang Post and Telecommunications Administration and was very strict in his dealings with people. When I moved north, he warned me that it was best to settle the household’s old accounts first and then start afresh. So Lihua and I sorted out the debts others owed Meilin and those Meilin owed others. Only then did I realize that a great artist’s household could be so stretched that, when year-end bonuses were due, I had to draw on my own savings from Hangzhou. So Lihua and I worked hard to open a new chapter in Meilin’s art career.

Over the past 20 years, we have spared no effort to promote Meilin’s art. Numerous urban sculptures have been erected across the country, four Han Meilin Art Museums and the Han Meilin Art Foundation have been established, and many primary schools and many "Meilin classrooms" have been built… Lihua and I are golden partners. She is a lawyer and cautious, while I am bold and decisive. When I charge ahead at the front, she always cleans up after me at the rear, without complaint or regret.

▲ Twenty years through thick and thin with Housekeeper Chen

I remember that when we were weighing whether to build the first two Han Meilin Art Museums, our opinions differed. My optimism and her pessimism formed two distinct camps. My habit was not to persuade her step by step but to show her the results after acting. In the end, she would accept my success while sincerely feeling for the effort it had cost me. So after the first two Han Meilin Art Museums were built, she chose to believe in me and fight alongside me.

Ten years ago, we started planning to establish the Han Meilin Art Foundation. When Lihua learned that the initial capital would be 20 million RMB, the work stalled, until one day I put my finger on exactly what was worrying her. I said, "Lihua, isn’t your concern just that once 20 million yuan goes into the foundation account, it will belong to the nation forever and can never be taken back? I am willing!" With that, Lihua formally began the foundation’s registration process. There is another thing I cannot forget. On December 21, 2013, the day of the first "Han Meilin Day," Lihua could not go back to Yinchuan to see her father off because she had to organize the event. I still cannot forgive myself for this.

To make her work easier, Lihua became a semi-retired lawyer many years ago. She moved her family close to us and devoted most of her energy to Meilin’s work. Much of the work of the Han Meilin Art Foundation and the Han Meilin Art Museums depends on her. Her small home is decorated like a little art salon and is much admired by her colleagues.

That a senior lawyer could "defect" and become a senior arts professional: isn’t this a love bonus for Meilin and me?

About the author of Just in Time

Zhou Jianping, a screenwriter, was born in 1964 and graduated from the first writers’ class of the Chinese Department of Zhejiang University. She is currently chief curator of the Han Meilin Art Museums in four cities (Hangzhou, Beijing, Yinchuan and Yixing) and secretary-general of the Han Meilin Art Foundation. She has served as vice chairman and secretary-general of the Zhejiang Film Association, director of large-scale events of the China Film Association, and a director of the Beijing Youth Development Foundation, the China Tianhan Foundation, the China Film Foundation, the China Xia Yan Literature Society and the China Film Producers Association. She has written more than 2 million words of film scripts, TV series, novels and reportage, published in major publications across the country. Among them, her screenplay "Penitentiary Angel" was brought to the screen by the renowned director Xie Jin. In 1996 the film won the top honor at the 3rd Beijing College Student Film Festival, and it was selected for the United Nations Fourth World Conference on Women. Starting in 2002, she served for 13 consecutive years as general planner of the award ceremony of the China Golden Rooster and Hundred Flowers Film Festival.